Part 6 of 6 in the SCARCE-CXR series

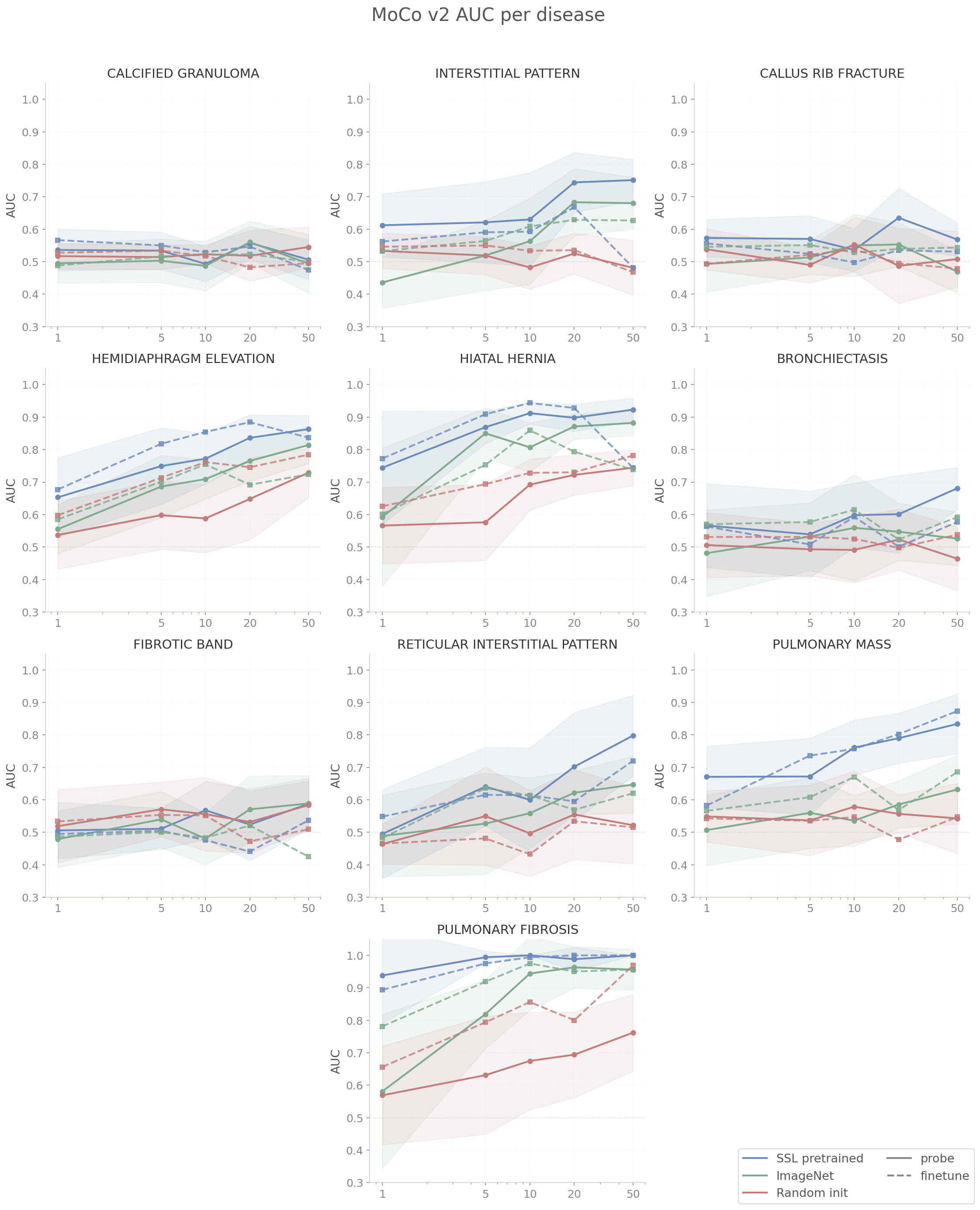

6.1 Results: MoCo v2 Probe + Finetune

All experiments were evaluated in a three-way comparison among SSL pretrained (112k NIH chest X-rays), ImageNet pretrained (standard torchvision ResNet50), and random init (sanity check).

Each (disease, shot count) combination was evaluated over 10 independent trials with random seeding. The AUC values reported are the mean across those 10 trials; the ± values in the appendix tables are the standard deviation. Full numerical results for all 10 diseases are in the Appendix: MoCo v2.
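For readers reproducing the protocol, here is a minimal sketch of the per-combination aggregation. It assumes AUC is the standard ROC AUC and that the reported ± is the sample standard deviation over trials (the post does not say whether sample or population std is used). `roc_auc`, `summarize_trials`, and `toy_trial` are hypothetical names, and `toy_trial` stands in for one full "sample shots, fit, score the test set" trial using synthetic scores.

```python
import random
import statistics


def roc_auc(scores, labels):
    """ROC AUC as the Mann-Whitney statistic: the chance a random positive
    outscores a random negative, counting ties as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def summarize_trials(run_trial, n_trials=10):
    """Run one trial per seed; return (mean AUC, sample std of AUC)."""
    aucs = [run_trial(seed) for seed in range(n_trials)]
    return statistics.mean(aucs), statistics.stdev(aucs)


def toy_trial(seed):
    # Hypothetical stand-in for "sample k shots, fit a classifier, score the test set".
    rng = random.Random(seed)
    labels = [1] * 20 + [0] * 20
    scores = [rng.gauss(1.0 if y == 1 else 0.0, 1.0) for y in labels]
    return roc_auc(scores, labels)


mean_auc, std_auc = summarize_trials(toy_trial)
print(f"AUC {mean_auc:.3f} ± {std_auc:.3f}")
```

The pairwise loop is quadratic in test-set size, which is fine for small rare-disease test sets; for larger ones a rank-based formula or scikit-learn's `roc_auc_score` would be the usual choice.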

6.2 Analysis: MoCo v2

SSL pretrained beats ImageNet and random init at every shot count from 1 to 50 in both probe and finetune modes, averaged across all rare diseases. We attribute this mainly to contrastive learning combined with the VICReg variance term, which we added after observing dimensional collapse in our first run. The variance term is a training-only loss, so it costs nothing at inference time, and downstream the SSL probe holds an advantage over ImageNet ranging from 0.056 to 0.118 AUC across shot counts.
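The VICReg variance term is a hinge on the per-dimension standard deviation of a batch of embeddings. Below is a minimal NumPy sketch, assuming the formulation from the VICReg paper (target std `gamma = 1`, `eps = 1e-4`). How the term was weighted against the MoCo v2 loss in this project is not stated, so the combination shown in the comment is an assumption.

```python
import numpy as np


def vicreg_variance_term(z, gamma=1.0, eps=1e-4):
    """Mean hinge penalty on the per-dimension std of embeddings z, shape (batch, dim).

    Dimensions whose std across the batch falls below gamma are penalized,
    which pushes back against dimensional collapse. It is only used during
    pretraining, so it adds nothing at inference time.
    """
    std = np.sqrt(z.var(axis=0, ddof=1) + eps)  # per-dimension std over the batch
    return float(np.mean(np.maximum(0.0, gamma - std)))


# Assumed usage: loss = moco_infonce + var_weight * vicreg_variance_term(query_embeddings)
rng = np.random.default_rng(0)
collapsed = np.ones((256, 128))                 # every sample maps to the same vector
spread = rng.normal(0.0, 2.0, size=(256, 128))  # per-dimension std around 2
print(vicreg_variance_term(collapsed))  # about 0.99, i.e. 1 - sqrt(1e-4)
print(vicreg_variance_term(spread))     # 0.0
```

A fully collapsed batch pays almost the maximum penalty, while a batch whose dimensions already vary more than `gamma` pays nothing. This is why the term can prevent collapse without fighting the contrastive objective once the embedding space is healthy.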

On the frozen linear probe, the SSL advantage is largest at 1-shot (+0.118 AUC). The gap narrows at 5-shot and 10-shot as ImageNet features extract more signal from more examples, then stabilizes. At 50-shot, the SSL probe reaches 0.751 vs ImageNet's 0.669, still a meaningful +0.082 gap. Probe AUC climbs steadily from 0.629 to 0.751 across the shot sweep. This suggests the frozen SSL features have genuine linear structure for these diseases, which keeps paying off with more labeled data without any backbone adaptation.
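To make "frozen linear probe" concrete: the backbone's pooled features are computed once, and only a logistic-regression head is trained on them. Here is a minimal NumPy sketch using plain gradient descent. The learning rate, step count, and L2 strength are hypothetical, and the project may use a library solver instead. The synthetic features stand in for frozen backbone outputs.

```python
import numpy as np


def fit_linear_probe(features, labels, lr=0.1, steps=500, l2=1e-3):
    """Train a logistic-regression head on frozen features of shape (n, d).

    Only w and b are learned; the features (and hence the backbone) never change.
    """
    n, d = features.shape
    w, b = np.zeros(d), 0.0
    for _ in range(steps):
        logits = np.clip(features @ w + b, -30.0, 30.0)
        probs = 1.0 / (1.0 + np.exp(-logits))
        err = probs - labels  # d(mean BCE)/d(logits), times n
        w -= lr * (features.T @ err / n + l2 * w)
        b -= lr * float(err.mean())
    return w, b


# Synthetic "frozen features": 5 shots per class, 16 dimensions.
rng = np.random.default_rng(0)
train_x = np.vstack([rng.normal(-1.0, 1.0, (5, 16)), rng.normal(1.0, 1.0, (5, 16))])
train_y = np.array([0] * 5 + [1] * 5, dtype=float)
w, b = fit_linear_probe(train_x, train_y)

test_neg = rng.normal(-1.0, 1.0, (50, 16)) @ w + b
test_pos = rng.normal(1.0, 1.0, (50, 16)) @ w + b
pairwise_auc = float(np.mean(test_pos[:, None] > test_neg[None, :]))
print(f"held-out AUC: {pairwise_auc:.3f}")
```

Because only `d + 1` parameters are fit, the probe's quality is bounded by how linearly separable the frozen features already are. That is the property the shot sweep above is measuring.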

On gradient finetuning, the SSL-ImageNet gap is smaller and noisier (+0.058 at 1-shot, +0.022 at 10-shot, then back up to +0.059 at 20-shot), and it does not cleanly close. SSL's advantage over random init stays substantial through 20-shot, which confirms that pretraining itself matters. The finetune mode also reveals a ceiling: SSL finetune peaks at 0.690 (20-shot) and drops to 0.677 at 50-shot, well below the 50-shot probe (0.751). A plausible explanation is that updating the whole backbone on 50 binary examples adds variance that a linear probe on frozen features largely avoids.
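The appendix makes this explanation at least partly checkable at 50-shot. The snippet below copies the MoCo v2 SSL standard deviations from the appendix table and compares trial-to-trial spread between probe and finetune. It only shows that finetuning is noisier across seeds; on its own, it does not prove that overfitting is the cause.

```python
import statistics

# 50-shot MoCo v2 SSL std across 10 trials, copied from the appendix
# (same disease order as the table: calcified granuloma ... pulmonary fibrosis).
probe_std = [0.061, 0.064, 0.051, 0.042, 0.035, 0.064, 0.079, 0.124, 0.092, 0.000]
finetune_std = [0.043, 0.139, 0.037, 0.131, 0.167, 0.180, 0.126, 0.159, 0.049, 0.000]

mean_probe = statistics.mean(probe_std)
mean_finetune = statistics.mean(finetune_std)
noisier = sum(f > p for p, f in zip(probe_std, finetune_std))

print(f"mean std: probe {mean_probe:.3f}, finetune {mean_finetune:.3f}")
print(f"finetune noisier on {noisier} of {len(probe_std)} diseases")
```

On these numbers, finetuning's average spread (about 0.103) is roughly 1.7x the probe's (about 0.061), and finetuning is the noisier mode on 6 of the 10 diseases.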

The takeaway: if you have more than ~10 labeled examples per class and can afford gradient updates, the domain gap between ImageNet and chest X-rays matters less than you might think, because finetuning adapts ImageNet features toward the domain. But with 1 to 5 labeled examples and a frozen backbone, domain-specific SSL is the right call, and even at 50-shot a frozen MoCo v2 SSL probe outperforms gradient finetuning.

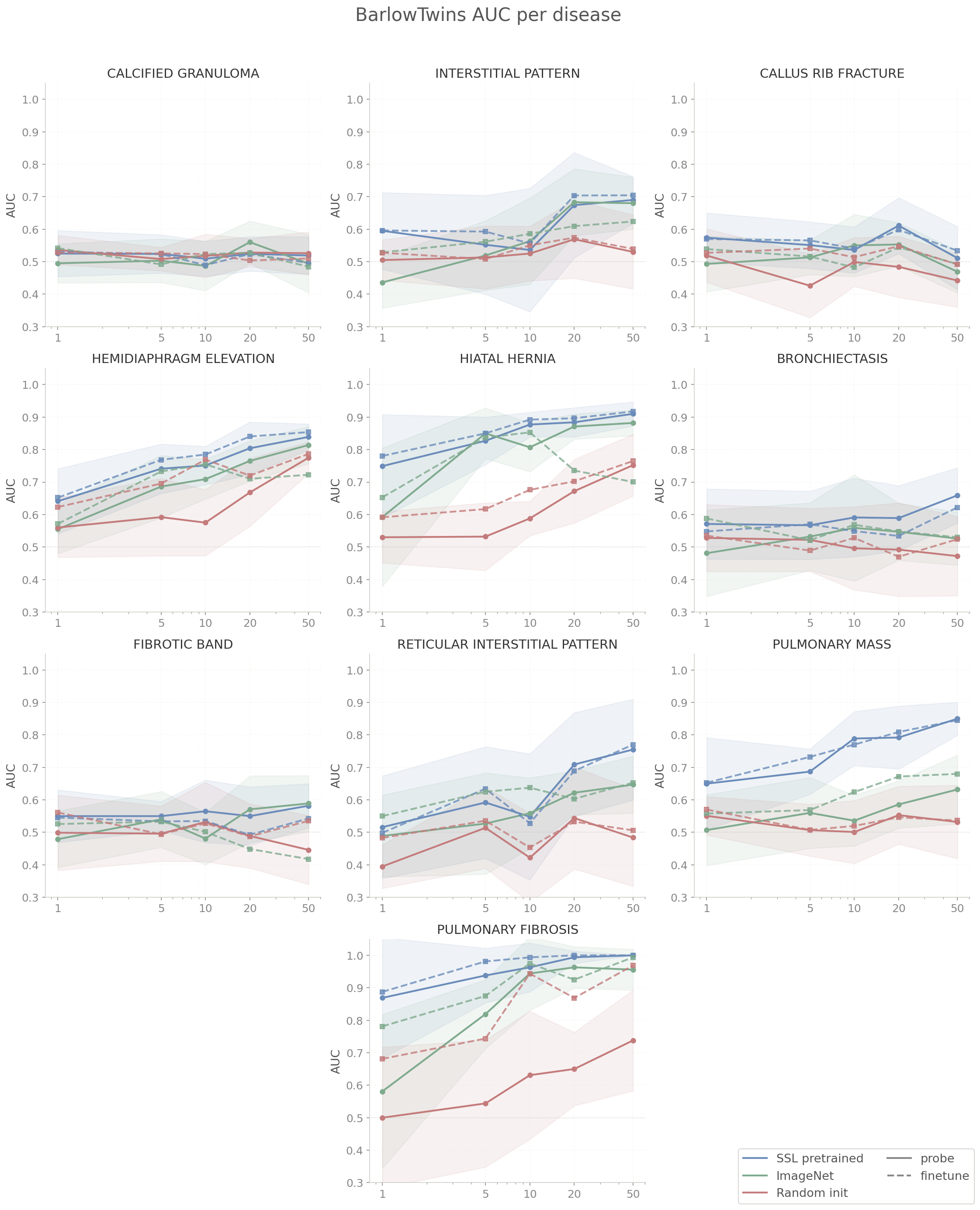

6.3 Results: BarlowTwins Probe + Finetune

Full numerical results for all 10 diseases are in the Appendix: BarlowTwins.

6.4 Analysis: BarlowTwins

BarlowTwins SSL beats ImageNet on probe for 9 of 10 diseases at 50-shot, despite using a ResNet18 backbone (about 11.7M parameters and 512-dimensional features, versus about 25.6M parameters and 2048-dimensional features for ImageNet's ResNet50). Even with this smaller backbone, the cross-correlation (redundancy-reduction) objective encodes enough domain-specific structure to produce a consistent probe advantage. The one exception is fibrotic band, where the ImageNet probe (0.589) marginally exceeds SSL (0.582). This may be because the finding consists of very subtle, diffuse linear opacities that require more representational capacity to separate.
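For reference, the Barlow Twins objective works in three steps. It standardizes each embedding dimension over the batch, forms the cross-correlation matrix between two augmented views, and pushes that matrix toward the identity. Below is a minimal NumPy sketch of the loss. The default `lambd = 5e-3` is in line with the setting reported in the Barlow Twins paper; the value used for this project's ResNet18 run is not stated.

```python
import numpy as np


def barlow_twins_loss(z_a, z_b, lambd=5e-3, eps=1e-5):
    """Barlow Twins loss for two views' embeddings, each of shape (batch, dim)."""
    n = z_a.shape[0]
    # Standardize every embedding dimension across the batch.
    z_a = (z_a - z_a.mean(axis=0)) / (z_a.std(axis=0) + eps)
    z_b = (z_b - z_b.mean(axis=0)) / (z_b.std(axis=0) + eps)
    c = z_a.T @ z_b / n                              # (dim, dim) cross-correlation
    diag = np.diag(c)
    invariance = np.sum((1.0 - diag) ** 2)           # diagonal pushed toward 1
    redundancy = np.sum(c ** 2) - np.sum(diag ** 2)  # off-diagonal pushed toward 0
    return float(invariance + lambd * redundancy)


rng = np.random.default_rng(0)
z = rng.normal(size=(512, 8))
print(barlow_twins_loss(z, z))                          # near 0: views agree, dims decorrelated
print(barlow_twins_loss(z, rng.normal(size=(512, 8))))  # large: views are unrelated
```

The off-diagonal term is what distinguishes this from a plain invariance loss. It discourages different feature dimensions from encoding the same thing, which is one way a small backbone can make efficient use of its 512 dimensions.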

Probe and finetune are essentially tied at 50-shot: 0.731 SSL probe vs 0.729 SSL finetune. This differs from MoCo v2, where gradient finetuning performed worse at 50-shot (0.677 finetune vs 0.751 probe). One possible reading is that a smaller backbone carries less risk of overfitting on 50 binary examples. Finetune highlights include hiatal hernia (SSL 0.917 vs ImageNet 0.700) and hemidiaphragm elevation (SSL 0.854 vs ImageNet 0.722). The overall SSL-to-ImageNet finetune gap at 50-shot (0.729 vs 0.630, +0.099) is larger than the probe gap (0.731 vs 0.669, +0.062), which suggests that gradient updates amplify the SSL domain advantage.

For smaller backbones, BarlowTwins pretraining on domain-specific data appears to be the right call. Probe-finetune parity means you can default to frozen inference without sacrificing performance, which is the simpler deployment path. The remaining open question is whether the SSL advantage would widen or stay constant with a ResNet50 BarlowTwins run, since the ResNet18 capacity constraint was forced upon us.

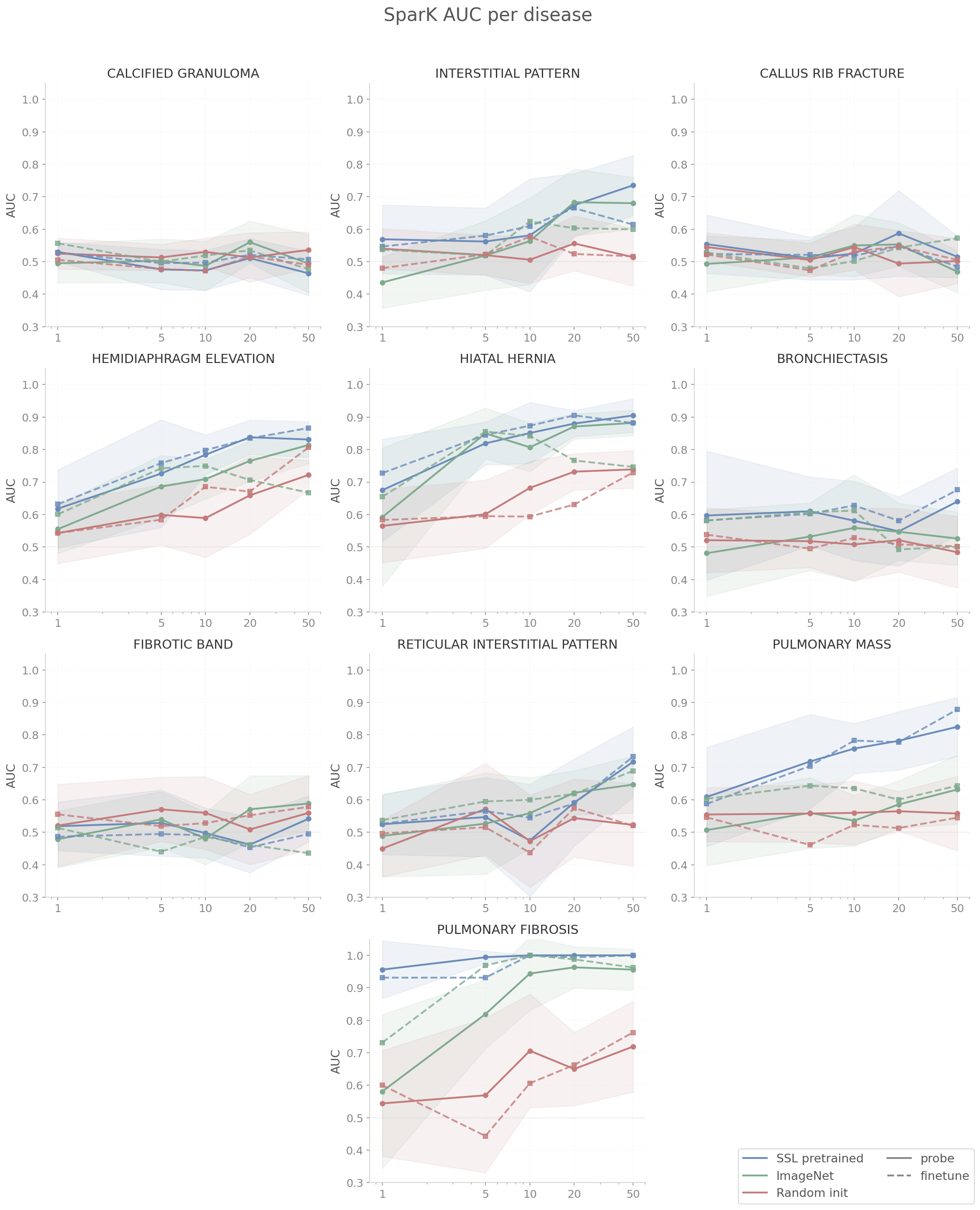

6.5 Results: SparK Probe + Finetune

Full numerical results for all 10 diseases are in the Appendix: SparK.

6.6 Analysis: SparK

The SparK SSL probe is the weakest of the three methods at 50-shot (mean 0.717), below MoCo v2 (0.751) and BarlowTwins (0.731), despite using the same ResNet50 backbone as MoCo v2. On some diseases, such as calcified granuloma (0.464 vs 0.494) and fibrotic band (0.542 vs 0.589), the ImageNet probe outperforms SSL outright. A plausible explanation is that SparK's reconstruction objective builds spatially coherent features suited to pixel-level prediction, rather than the discriminative invariances that MoCo v2 and BarlowTwins learn, which are better suited to classification.

The probe performs unevenly across diseases. On large-signal findings such as pulmonary mass (0.825 vs 0.632) and hiatal hernia (0.905 vs 0.882), SSL is ahead of ImageNet, but on findings that require subtle local texture discrimination the pattern reverses. This is consistent with SparK's reconstruction objective with zeroed masking organizing features around spatial coherence, rather than around the fine-grained discriminative cues we hoped it would capture.
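To illustrate what reconstruction with zeroed masking optimizes, here is a simplified NumPy sketch. It hides random patches by zeroing them, then scores the reconstruction only on the hidden patches. This is not SparK's actual implementation, which uses sparse convolutions and a hierarchical decoder. The 32-pixel patch size and 0.6 mask ratio are assumptions, chosen to match a ResNet's 32x downsampling. Note that nothing in this loss directly rewards separating one finding from another, which is one way to read the uneven probe results.

```python
import numpy as np


def random_patch_mask(h, w, patch, ratio, rng):
    """Boolean (h // patch, w // patch) grid; True marks a masked patch."""
    gh, gw = h // patch, w // patch
    flat = np.zeros(gh * gw, dtype=bool)
    flat[rng.choice(gh * gw, size=int(round(ratio * gh * gw)), replace=False)] = True
    return flat.reshape(gh, gw)


def to_pixels(mask, patch):
    """Expand a patch-level mask to pixel resolution."""
    return np.repeat(np.repeat(mask, patch, axis=0), patch, axis=1)


def masked_recon_loss(image, recon, mask, patch):
    """MSE over masked pixels only; visible pixels do not contribute."""
    pixel_mask = to_pixels(mask, patch)
    return float(np.mean((recon[pixel_mask] - image[pixel_mask]) ** 2))


rng = np.random.default_rng(0)
image = rng.random((224, 224))  # stand-in for a grayscale chest X-ray
mask = random_patch_mask(224, 224, patch=32, ratio=0.6, rng=rng)
model_input = np.where(to_pixels(mask, 32), 0.0, image)  # masked patches zeroed
print(mask.sum(), "of", mask.size, "patches masked")
```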

Finetuning does not lift the mean: SSL finetune (0.714) is essentially tied with the SSL probe (0.717) at 50-shot. The per-disease picture is more interesting. On several diseases, finetuning improves on the probe and opens a wide margin over ImageNet. Pulmonary mass rises from 0.825 (probe) to 0.879 (finetune), versus 0.644 for ImageNet finetune. Hemidiaphragm elevation rises from 0.831 to 0.866, versus 0.667. The useful features seem to be present, and gradient updates appear to surface them in a way a linear probe does not. One notable reversal is callus rib fracture, where SSL finetune (0.484) falls below ImageNet (0.572). This suggests that for findings characterized by differences in rib edge texture, ImageNet's natural-image edge filters are a better prior than what reconstruction pretraining produces.
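The per-disease comparison is easy to tabulate from the SparK appendix table. This snippet uses only the 50-shot SSL probe and finetune means already in the appendix, and counts where finetuning helps.

```python
# 50-shot SparK SSL mean AUC as (probe, finetune), copied from the appendix table.
spark_ssl = {
    "calcified granuloma": (0.464, 0.507),
    "interstitial pattern": (0.735, 0.614),
    "callus rib fracture": (0.515, 0.484),
    "hemidiaphragm elevation": (0.831, 0.866),
    "hiatal hernia": (0.905, 0.882),
    "bronchiectasis": (0.640, 0.676),
    "fibrotic band": (0.542, 0.495),
    "reticular interstitial pattern": (0.717, 0.733),
    "pulmonary mass": (0.825, 0.879),
    "pulmonary fibrosis": (1.000, 1.000),
}

# Finetune minus probe, rounded to the table's three decimals.
deltas = {name: round(ft - probe, 3) for name, (probe, ft) in spark_ssl.items()}
improved = sorted((d, name) for name, d in deltas.items() if d > 0)
hurt = sorted((d, name) for name, d in deltas.items() if d < 0)
print(f"finetune > probe on {len(improved)} diseases, < probe on {len(hurt)}")
for d, name in reversed(improved):
    print(f"  {name}: {d:+.3f}")
```

Finetuning helps on 5 diseases and hurts on 4, with interstitial pattern dropping most (-0.121). Pulmonary fibrosis stays at 1.000 either way. This split is why the means tie even though individual diseases move substantially.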

If frozen-backbone inference is required, SparK is the wrong choice: MoCo v2 and BarlowTwins both achieve a higher mean linear-probe AUC at every shot count. However, with a gradient budget and 10+ labeled examples per class, SparK finetune is competitive with MoCo v2; at 50-shot its mean finetune AUC (0.714) is actually higher.

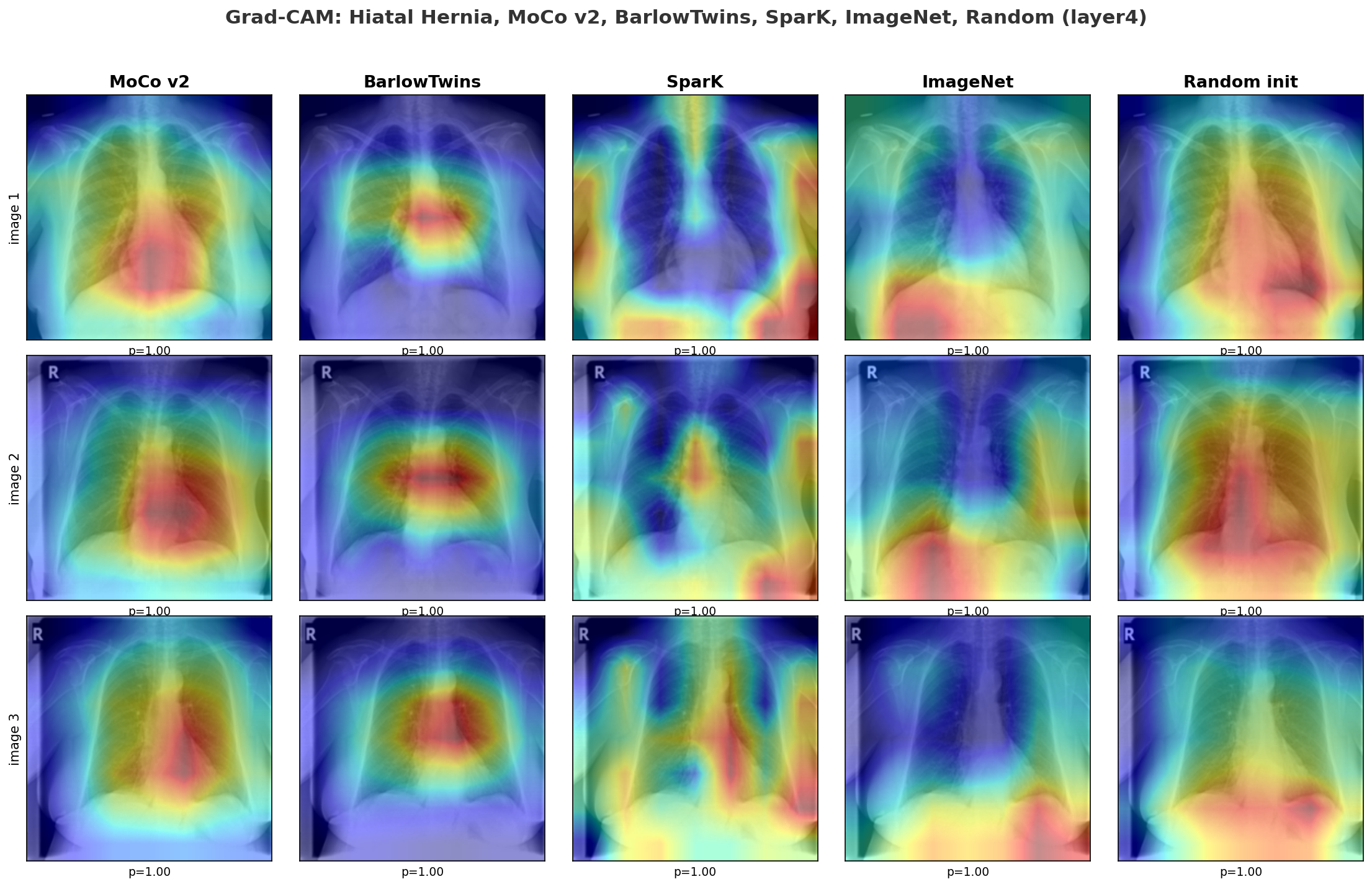

6.7 Grad-CAM Analysis

I was curious whether the SSL backbones were even looking at the right part of the image, so I ran a Grad-CAM comparison for hiatal hernia at layer4 across all five initializations: MoCo v2, BarlowTwins, SparK, ImageNet, and random init.
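For readers unfamiliar with the method: Grad-CAM weights each channel of the target layer's activations by the spatially averaged gradient of the class score, sums the weighted channels, and keeps the positive part. Below is a minimal NumPy sketch of that computation. It assumes the layer4 activations and gradients have already been captured (for example with forward and backward hooks in the training framework) and omits the final upsampling to image resolution.

```python
import numpy as np


def grad_cam(activations, gradients):
    """Grad-CAM heatmap for one image.

    activations: (C, H, W) feature maps from the target layer (e.g. ResNet layer4).
    gradients:   (C, H, W) gradient of the class score w.r.t. those feature maps.
    Returns an (H, W) map scaled to [0, 1].
    """
    weights = gradients.mean(axis=(1, 2))             # one importance weight per channel
    cam = np.tensordot(weights, activations, axes=1)  # weighted sum of channels -> (H, W)
    cam = np.maximum(cam, 0.0)                        # ReLU: keep evidence for the class
    peak = cam.max()
    return cam / peak if peak > 0 else cam


# Toy check: channel 0 fires at (2, 3) and is the only channel with a positive gradient.
acts = np.zeros((4, 7, 7))
acts[0, 2, 3] = 5.0
acts[1] = 1.0
grads = np.zeros((4, 7, 7))
grads[0] = 1.0
heatmap = grad_cam(acts, grads)
print(np.unravel_index(heatmap.argmax(), heatmap.shape))
```

For a 224x224 input, ResNet50's layer4 has 2048 channels at 7x7 (ResNet18's has 512). This coarse grid explains why layer4 heatmaps highlight regions rather than fine structures.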

Both MoCo v2 and BarlowTwins focus on the retrocardiac and diaphragm region (the correct anatomical location of a hiatal hernia), despite never seeing a disease label during pretraining. BarlowTwins is noticeably more concentrated over the hernia site, while MoCo v2 covers the right region with a broader activation footprint across the lower chest.

SparK shows diffuse attention around the diaphragm, consistent with a reconstruction objective that learns spatially coherent texture. ImageNet, pretrained on natural images, produces scattered attention that is not organized around the diaphragm. Random init shows widespread, unfocused activation with no anatomical structure.

Grad-CAM suggests that SSL pretraining on unlabeled chest X-rays organizes feature representations around anatomical structure. At least for this finding, the linear probe or gradient finetune does not appear to be what taught the backbone where to look; SSL pretraining had already done that.

6.8 Limitations and Next Steps

Data access: I manually downloaded only 17 of the 54 PadChest folders due to time and storage constraints. As a result, some rarer diseases, such as pneumothorax, fell below the required labeled-example threshold and were excluded. Infrastructure to aggregate data across institutions and hospital systems would capture far more radiological diversity than current public datasets offer, and could substantially advance the field.

Architecture: I selected ResNet50 because it suits the size of the 112,000-image NIH dataset, and convolutional inductive biases align well with chest X-ray anatomy. Scaling the dataset beyond 600,000 images would make a Vision Transformer viable, and I expect such a model would perform better on diffuse bilateral disease patterns that span both lung fields. I also consider vision-language self-supervised learning a promising direction. Prior research has shown that training on paired X-rays and radiology reports can significantly outperform image-only methods on downstream tasks, yet relatively little work leverages this multimodal data.

Contrastive vs generative models: One of the clearest results of this project is that MoCo v2 outperforms SparK on frozen linear probes (mean 50-shot AUC 0.751 vs 0.717). By design, SparK optimizes for localized reconstruction, while MoCo optimizes for global invariances. A logical extension is to combine the two by applying a contrastive loss to representations derived from partially masked inputs. The model would then have to reconstruct missing regions while maintaining augmentation invariance. Evaluating this combined approach against a pure contrastive baseline at medical-imaging scale would be an interesting experiment.
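Here is a minimal NumPy sketch of the proposed combined objective, under several stated assumptions. The contrastive part is InfoNCE with in-batch negatives (MoCo v2 instead draws negatives from a momentum-encoder queue), and the temperature of 0.2 matches MoCo v2's default. `q_masked` and `k_full` are hypothetical embeddings of a masked view and an unmasked augmented view. The reconstruction term would come from a masked-patch loss like SparK's and is passed in as a number here. `contrastive_weight` is a hypothetical knob.

```python
import numpy as np


def info_nce(q, k, temperature=0.2):
    """InfoNCE with in-batch negatives: row i of q should match row i of k."""
    q = q / np.linalg.norm(q, axis=1, keepdims=True)
    k = k / np.linalg.norm(k, axis=1, keepdims=True)
    logits = q @ k.T / temperature                       # (batch, batch) cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))           # positives lie on the diagonal


def combined_loss(recon_loss, q_masked, k_full, contrastive_weight=1.0):
    """Hypothetical hybrid: reconstruct masked regions AND stay invariant across views."""
    return recon_loss + contrastive_weight * info_nce(q_masked, k_full)


rng = np.random.default_rng(0)
k_full = rng.normal(size=(64, 32))
q_aligned = k_full + 0.1 * rng.normal(size=(64, 32))  # masked view still matches its pair
q_random = rng.normal(size=(64, 32))                  # masked view lost the identity
print(combined_loss(0.5, q_aligned, k_full), combined_loss(0.5, q_random, k_full))
```

The contrastive term only stays low if the embedding of the masked input can still pick out its own unmasked partner among the batch. This is the augmentation-invariance pressure that pure reconstruction lacks.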

Training diagnostics: I wrote collapse_monitor.py only after the initial MoCo v1 dimensional collapse. In the future, I envision a more robust implementation that monitors effective rank during pretraining and dynamically adjusts regularization to maintain a target dimensionality. The Grad-CAM analysis here is also purely qualitative: I visually compared activation maps across five initializations for one disease. A more rigorous follow-up would apply a quantitative localization score across all diseases and initializations. For example, each Grad-CAM heatmap could be intersected with a radiologist-defined anatomical mask to measure what fraction of activation falls within the clinically relevant region. That would give a clearer answer to how much SSL pretraining actually improves anatomical attention precision. It would also show whether the improvement is consistent across disease types or specific to findings with large global texture changes.
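Both proposed diagnostics are short to compute. Below is a minimal NumPy sketch built on two assumptions. First, effective rank is defined as the exponential of the entropy of the normalized singular-value spectrum (the Roy and Vetterli definition; the existing collapse_monitor.py may use a different measure). Second, the localization score is the fraction of Grad-CAM mass inside a binary anatomical mask. `adjust_variance_weight` is a hypothetical controller illustrating the "dynamically adjust regularization" idea, not something the project implements.

```python
import numpy as np


def effective_rank(embeddings, eps=1e-12):
    """exp(entropy) of the normalized singular values of centered (batch, dim) embeddings."""
    centered = embeddings - embeddings.mean(axis=0)
    s = np.linalg.svd(centered, compute_uv=False)
    p = s / (s.sum() + eps)
    p = p[p > 0]
    return float(np.exp(-np.sum(p * np.log(p))))


def adjust_variance_weight(weight, erank, target, factor=1.5, floor=1.0, ceiling=25.0):
    """Hypothetical controller: strengthen the variance term while rank is below target."""
    if erank < target:
        return min(weight * factor, ceiling)
    return max(weight / factor, floor)


def localization_score(cam, anatomy_mask):
    """Fraction of total Grad-CAM activation inside a binary anatomical mask."""
    total = cam.sum()
    return float(cam[anatomy_mask].sum() / total) if total > 0 else 0.0


rng = np.random.default_rng(0)
healthy = rng.normal(size=(2000, 16))
collapsed = np.outer(rng.normal(size=2000), rng.normal(size=16))  # rank-1 embeddings
print(effective_rank(healthy), effective_rank(collapsed))
```

A healthy 16-dimensional embedding scores close to 16, while a fully collapsed one scores about 1. This makes effective rank a natural signal to log every few hundred steps during pretraining.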

6.9 Closing Thoughts

This project started as a RandomForest getting 55% accuracy on a chest X-ray task, the result of treating a label-scarce problem with standard supervised learning. Pretraining on unlabeled domain-specific data gave consistently better results than ImageNet or random initialization, even with only one labeled example. The open problems from here are more on the data side than the modeling side. If you or your team is working on hard problems with constrained data or limited compute, I'd love to hear about it. The full code is on GitHub.

7. Appendix: Full Results Tables

50-shot AUC (mean ± std across 10 trials) for all 10 diseases, probe and finetune, across all three init strategies.

MoCo v2

| Disease | Probe SSL | Probe ImageNet | Probe Random | Finetune SSL | Finetune ImageNet | Finetune Random |
|---|---|---|---|---|---|---|
| calcified granuloma | 0.506±0.061 | 0.494±0.090 | 0.545±0.063 | 0.474±0.043 | 0.499±0.065 | 0.495±0.077 |
| interstitial pattern | 0.751±0.064 | 0.680±0.080 | 0.481±0.084 | 0.482±0.139 | 0.627±0.087 | 0.468±0.113 |
| callus rib fracture | 0.568±0.051 | 0.469±0.065 | 0.508±0.086 | 0.531±0.037 | 0.544±0.087 | 0.479±0.078 |
| hemidiaphragm elevation | 0.863±0.042 | 0.814±0.057 | 0.729±0.076 | 0.836±0.131 | 0.724±0.076 | 0.784±0.044 |
| hiatal hernia | 0.923±0.035 | 0.882±0.039 | 0.744±0.055 | 0.744±0.167 | 0.738±0.141 | 0.781±0.068 |
| bronchiectasis | 0.681±0.064 | 0.526±0.082 | 0.464±0.098 | 0.577±0.180 | 0.592±0.105 | 0.538±0.124 |
| fibrotic band | 0.588±0.079 | 0.589±0.086 | 0.584±0.074 | 0.537±0.126 | 0.425±0.121 | 0.510±0.083 |
| reticular interstitial pattern | 0.798±0.124 | 0.647±0.088 | 0.522±0.119 | 0.720±0.159 | 0.620±0.158 | 0.516±0.127 |
| pulmonary mass | 0.834±0.092 | 0.632±0.106 | 0.543±0.108 | 0.874±0.049 | 0.686±0.139 | 0.547±0.081 |
| pulmonary fibrosis | 1.000±0.000 | 0.956±0.063 | 0.762±0.118 | 1.000±0.000 | 0.956±0.079 | 0.969±0.058 |
| average | 0.751 | 0.669 | 0.588 | 0.678 | 0.641 | 0.609 |

BarlowTwins

| Disease | Probe SSL | Probe ImageNet | Probe Random | Finetune SSL | Finetune ImageNet | Finetune Random |
|---|---|---|---|---|---|---|
| calcified granuloma | 0.519±0.058 | 0.494±0.090 | 0.526±0.066 | 0.496±0.071 | 0.484±0.043 | 0.510±0.087 |
| interstitial pattern | 0.690±0.072 | 0.680±0.080 | 0.530±0.114 | 0.704±0.068 | 0.624±0.078 | 0.539±0.055 |
| callus rib fracture | 0.511±0.097 | 0.469±0.065 | 0.442±0.082 | 0.534±0.048 | 0.492±0.091 | 0.491±0.054 |
| hemidiaphragm elevation | 0.839±0.040 | 0.814±0.057 | 0.775±0.049 | 0.854±0.042 | 0.722±0.062 | 0.787±0.046 |
| hiatal hernia | 0.910±0.037 | 0.882±0.039 | 0.752±0.095 | 0.917±0.046 | 0.700±0.172 | 0.765±0.080 |
| bronchiectasis | 0.659±0.085 | 0.526±0.082 | 0.472±0.122 | 0.622±0.065 | 0.530±0.173 | 0.524±0.130 |
| fibrotic band | 0.582±0.068 | 0.589±0.086 | 0.446±0.106 | 0.543±0.075 | 0.418±0.134 | 0.536±0.087 |
| reticular interstitial pattern | 0.755±0.155 | 0.647±0.088 | 0.484±0.150 | 0.770±0.094 | 0.653±0.152 | 0.506±0.154 |
| pulmonary mass | 0.850±0.051 | 0.632±0.106 | 0.532±0.113 | 0.845±0.061 | 0.680±0.149 | 0.536±0.092 |
| pulmonary fibrosis | 1.000±0.000 | 0.956±0.063 | 0.738±0.155 | 1.000±0.000 | 0.994±0.019 | 0.969±0.075 |
| average | 0.732 | 0.669 | 0.570 | 0.729 | 0.630 | 0.616 |

SparK

| Disease | Probe SSL | Probe ImageNet | Probe Random | Finetune SSL | Finetune ImageNet | Finetune Random |
|---|---|---|---|---|---|---|
| calcified granuloma | 0.464±0.068 | 0.494±0.090 | 0.536±0.056 | 0.507±0.043 | 0.476±0.040 | 0.490±0.072 |
| interstitial pattern | 0.735±0.092 | 0.680±0.080 | 0.514±0.089 | 0.614±0.128 | 0.600±0.127 | 0.517±0.057 |
| callus rib fracture | 0.515±0.064 | 0.469±0.065 | 0.502±0.069 | 0.484±0.069 | 0.572±0.048 | 0.505±0.110 |
| hemidiaphragm elevation | 0.831±0.055 | 0.814±0.057 | 0.722±0.045 | 0.866±0.090 | 0.667±0.122 | 0.807±0.033 |
| hiatal hernia | 0.905±0.052 | 0.882±0.039 | 0.739±0.058 | 0.882±0.080 | 0.747±0.091 | 0.729±0.072 |
| bronchiectasis | 0.640±0.103 | 0.526±0.082 | 0.484±0.110 | 0.676±0.115 | 0.501±0.104 | 0.502±0.115 |
| fibrotic band | 0.542±0.071 | 0.589±0.086 | 0.560±0.115 | 0.495±0.088 | 0.436±0.121 | 0.579±0.099 |
| reticular interstitial pattern | 0.717±0.108 | 0.647±0.088 | 0.523±0.128 | 0.733±0.114 | 0.689±0.152 | 0.520±0.133 |
| pulmonary mass | 0.825±0.091 | 0.632±0.106 | 0.558±0.115 | 0.879±0.078 | 0.644±0.114 | 0.545±0.112 |
| pulmonary fibrosis | 1.000±0.000 | 0.956±0.063 | 0.719±0.140 | 1.000±0.000 | 0.963±0.113 | 0.762±0.083 |
| average | 0.717 | 0.669 | 0.586 | 0.714 | 0.630 | 0.596 |